Scenario

I am attempting to implement supervised learning over a data set within a Java GUI application. The user will be given a list of items or ‘reports’ to inspect and will label them based on a set of available labels. Once the supervised learning is complete, the labelled instances will then be given to a learning algorithm. This will attempt to order the rest of the items on how likely it is the user will want to view them.

To get the most from the user’s time I want to pre-select the reports that will provide the most information about the entire collection of reports, and have the user label them. As I understand it, to calculate this, it would be necessary to find the sum of all the mutual information values for each report, and order them by that value. The labelled reports from supervised learning will then be used to form a Bayesian network to find the probability of a binary value for each remaining report.

Example

Here, an artificial example may help to explain, and may clear up confusion when I’ve undoubtedly used the wrong terminology 🙂 Consider an example where the application displays news stories to the user. It chooses which news stories to display first based on the user’s preference shown. Features of a news story which have a correlation are country of origin, category or date. So if a user labels a single news story as interesting when it came from Scotland, it tells the machine learner that there’s an increased chance other news stories from Scotland will be interesting to the user. Similar for a category such as Sport, or a date such as December 12th 2004.

This preference could be calculated by choosing any order for all news stories (e.g. by category, by date) or randomly ordering them, then calculating preference as the user goes along. What I would like to do is to get a kind of “head start” on that ordering by having the user look at a small number of specific news stories and say whether they’re interested in them (the supervised learning part). To choose which stories to show the user, I have to consider the entire collection of stories. This is where Mutual Information comes in. For each story I want to know how much it can tell me about all the other stories when it is classified by the user. For example, if there is a large number of stories originating from Scotland, I want to get the user to classify (at least) one of them. The same goes for other correlating features such as category or date. The goal is to find examples of reports which, when classified, provide the most information about the other reports.

Problem

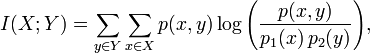

Because my math is a bit rusty, and I’m new to machine learning I’m having some trouble converting the definition of Mutual Information to an implementation in Java. Wikipedia describes the equation for Mutual Information as:

However, I’m unsure if this can actually be used when nothing has been classified, and the learning algorithm has not calculated anything yet.

As in my example, say I had a large number of new, unlabelled instances of this class:

    import java.util.Date;

    public class NewsStory {
        private String countryOfOrigin;
        private String category;
        private Date date;

        // constructor, etc.
    }

In my specific scenario, the correlation between fields/features is based on an exact match so, for instance, one day and 10 years difference in date are equivalent in their inequality.

The factors for correlation (e.g. is date more correlating than category?) are not necessarily equal, but they can be predefined and constant. Does this mean that the result of the function p(x,y) is the predefined value, or am I mixing up terms?

The Question (finally)

How can I go about implementing the mutual information calculation given this (fake) example of news stories? Libraries, javadoc, code examples etc. are all welcome information. Also, if this approach is fundamentally flawed, explaining why that is the case would be just as valuable an answer.

PS. I am aware of libraries such as Weka and Apache Mahout, so just mentioning them is not really useful for me. I’m still searching through documentation and examples for both these libraries looking for stuff on Mutual Information specifically. What would really help me is pointing to resources (code examples, javadoc) where these libraries help with mutual information.

I am guessing that your problem is something like…

“Given a list of unlabeled examples, sort the list by how much the predictive accuracy of the model would improve if the user labelled the example and added it to the training set.”

If this is the case, I don’t think mutual information is the right thing to use because you can’t calculate MI between two instances. The definition of MI is in terms of random variables and an individual instance isn’t a random variable, it’s just a value.

The features and the class label can be thought of as random variables. That is, they have a distribution of values over the whole data set. You can calculate the mutual information between two features, to see how ‘redundant’ one feature is given the other one, or between a feature and the class label, to get an idea of how much that feature might help prediction. This is how people usually use mutual information in a supervised learning problem.

I think ferdystschenko’s suggestion that you look at active learning methods is a good one.
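To make that suggestion a little more concrete: one common active learning strategy is uncertainty sampling, where the user is asked to label the items the current model is least sure about. The sketch below assumes some model already produces a probability of “interesting” for each unlabelled story; the class name, method name and story ids are all made up for illustration.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class UncertaintySampling {

    /**
     * Orders item ids so that the ones whose predicted probability is
     * closest to 0.5 (i.e. the most uncertain ones) come first.
     * The probabilities are assumed to come from some existing model.
     */
    static List<String> rankByUncertainty(Map<String, Double> probInteresting) {
        List<String> ids = new ArrayList<>(probInteresting.keySet());
        ids.sort(Comparator.comparingDouble(id -> Math.abs(probInteresting.get(id) - 0.5)));
        return ids;
    }

    public static void main(String[] args) {
        // Hypothetical model output for three unlabelled stories.
        Map<String, Double> predictions = new LinkedHashMap<>();
        predictions.put("story-a", 0.95);
        predictions.put("story-b", 0.52);
        predictions.put("story-c", 0.10);

        // story-b is nearest to 0.5, so it is the first one to show the user.
        System.out.println(rankByUncertainty(predictions));
    }
}
```

After each new label the model would be retrained and the ranking recomputed, so the “most useful next item” changes as the user goes along.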

In response to Grundlefleck’s comment, I’ll go a bit deeper into terminology by using his idea of a Java object analogy…

Collectively, we have used the terms ‘instance’, ‘thing’, ‘report’ and ‘example’ to refer to the object being classified. Let’s think of these things as instances of a Java class (I’ve left out the boilerplate constructor):
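A minimal sketch of such a class, using the names that appear in the next paragraph (`e1`, `f1`, `f2`, ‘foo’, ‘bar’). The class name `Example`, the second example `e2` and its values are assumptions, and the constructor is written out here only so that the snippet compiles on its own:

```java
class Example {
    final String f1;
    final String f2;

    Example(String f1, String f2) {
        this.f1 = f1;
        this.f2 = f2;
    }
}

class ExampleDemo {
    public static void main(String[] args) {
        Example e1 = new Example("foo", "bar");
        Example e2 = new Example("foo", "baz"); // hypothetical second example

        System.out.println("e1: f1=" + e1.f1 + ", f2=" + e1.f2);
        System.out.println("e2: f1=" + e2.f1 + ", f2=" + e2.f2);
    }
}
```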

The usual terminology in machine learning is that e1 is an example, that all examples have two features f1 and f2 and that for e1, f1 takes the value ‘foo’ and f2 takes the value ‘bar’. A collection of examples is called a data set.

Take all the values of f1 for all examples in the data set. This is a list of strings, but it can also be thought of as a distribution. We can think of the feature as a random variable, with each value in the list being a sample taken from that random variable. So we can, for example, calculate the MI between f1 and f2. The pseudocode would be something like:

    n = total number of examples
    mi = 0
    for each value x taken by f1:
    {
        for each value y taken by f2:
        {
            p_xy = (number of examples where f1=x and f2=y) / n
            p_x  = (number of examples where f1=x) / n
            p_y  = (number of examples where f2=y) / n
            if p_xy > 0:
                mi += p_xy * log(p_xy / (p_x * p_y))
        }
    }

However, you can't calculate MI between e1 and e2; it's just not defined that way.
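For MI between two features, the pseudocode above could be written in Java roughly as follows. This is only a sketch: the class and method names are made up, each feature is passed as a list of values in the same example order, counts are divided by the number of examples to turn them into probabilities, and the natural log is used, so the result is in nats.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class MutualInformation {

    /** Estimates MI between two features from their observed values. */
    static double mutualInformation(List<String> f1, List<String> f2) {
        if (f1.size() != f2.size()) {
            throw new IllegalArgumentException("feature columns must have equal length");
        }
        int n = f1.size();

        // Count how often each value, and each pair of values, occurs.
        Map<String, Integer> countX = new HashMap<>();
        Map<String, Integer> countY = new HashMap<>();
        Map<List<String>, Integer> countXY = new HashMap<>();
        for (int i = 0; i < n; i++) {
            countX.merge(f1.get(i), 1, Integer::sum);
            countY.merge(f2.get(i), 1, Integer::sum);
            countXY.merge(Arrays.asList(f1.get(i), f2.get(i)), 1, Integer::sum);
        }

        // Only pairs that actually occur are visited, so p(x,y) is never 0.
        double mi = 0.0;
        for (Map.Entry<List<String>, Integer> e : countXY.entrySet()) {
            double pXY = e.getValue() / (double) n;
            double pX = countX.get(e.getKey().get(0)) / (double) n;
            double pY = countY.get(e.getKey().get(1)) / (double) n;
            mi += pXY * Math.log(pXY / (pX * pY));
        }
        return mi;
    }

    public static void main(String[] args) {
        // Toy data: four stories, one value per story for each feature.
        List<String> country = Arrays.asList("Scotland", "Scotland", "France", "France");
        List<String> category = Arrays.asList("Sport", "Sport", "Politics", "Politics");
        List<String> day = Arrays.asList("Mon", "Tue", "Mon", "Tue");

        // country determines category here, so MI equals ln 2 (about 0.693).
        System.out.println(mutualInformation(country, category));
        // country and day are independent here, so MI is 0.
        System.out.println(mutualInformation(country, day));
    }
}
```

The same method works for a feature against the class label once some labels exist: pass the label column as the second list.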